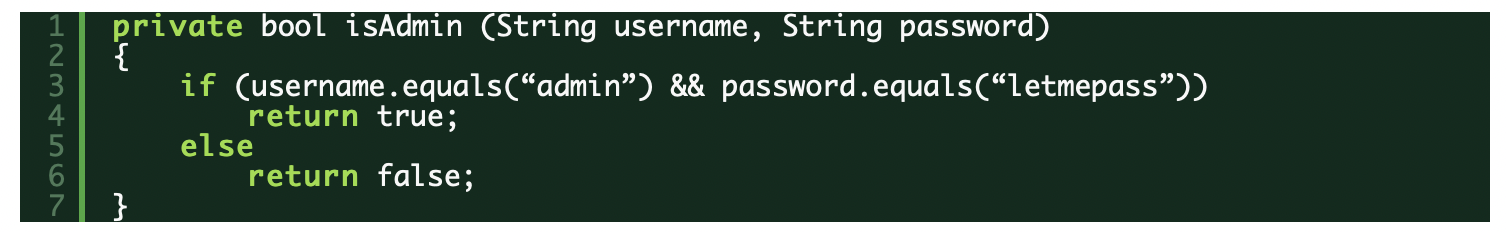

Hard-coded secrets include any type of sensitive information, such as usernames, passwords, SSH keys, and access tokens. They can be easily leaked to an attacker if an application’s source or configuration contains them. To help understand why this happens, consider the following Java code snippet:
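A minimal sketch of the kind of snippet meant here. The class and method names (`LoginCheck`, `isTestUser`) are hypothetical, chosen only for illustration; the two credential strings are the ones discussed in the next paragraph.

```java
// Illustrative only: a hypothetical login check with credentials left in
// from local testing. Code like this should never ship.
final class LoginCheck {

    // Hard-coded secrets: both literals are stored verbatim in the compiled class file.
    private static final String TEST_USER = "admin";
    private static final String TEST_PASSWORD = "letmepass";

    // Returns true when the supplied credentials match the embedded test account.
    static boolean isTestUser(String username, String password) {
        return TEST_USER.equals(username) && TEST_PASSWORD.equals(password);
    }

    public static void main(String[] args) {
        System.out.println(isTestUser("admin", "letmepass")); // prints "true"
    }
}
```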

A developer might insert such a block of code for local testing purposes and forget to remove it. When this Java source code is compiled, the resulting executable JAR file will contain the strings “admin” and “letmepass.” Wherever this executable ends up, whether downloaded onto a mobile device through an app store, deployed to a server, or dropped onto any other type of system, the strings can be scraped if an attacker gains access to the underlying storage.
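To make the scraping step concrete, here is a rough sketch of a `strings`-style scan in Java. It is an assumption about how such scraping might be done, not a description of any particular attack tool: it simply collects runs of printable ASCII bytes from a file. The class name `StringScraper` and the minimum run length are hypothetical. Because a JAR is a ZIP archive, the class files inside it would need to be extracted before a scan like this would see the literals.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.ArrayList;
import java.util.List;

// Sketch of a `strings`-style scan: collects runs of printable ASCII bytes.
final class StringScraper {

    // Returns every run of at least minLength printable ASCII characters in data.
    static List<String> printableRuns(byte[] data, int minLength) {
        List<String> runs = new ArrayList<>();
        StringBuilder current = new StringBuilder();
        for (byte b : data) {
            if (b >= 0x20 && b <= 0x7e) {
                current.append((char) b);
            } else {
                // A non-printable byte ends the current run.
                if (current.length() >= minLength) {
                    runs.add(current.toString());
                }
                current.setLength(0);
            }
        }
        if (current.length() >= minLength) {
            runs.add(current.toString());
        }
        return runs;
    }

    public static void main(String[] args) throws IOException {
        // Usage (hypothetical file name): java StringScraper.java LoginCheck.class
        byte[] data = Files.readAllBytes(Paths.get(args[0]));
        printableRuns(data, 5).forEach(System.out::println);
    }
}
```

Run against an extracted class file, a scan like this would list the embedded literals alongside class and method names; no decompiler is needed.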

Often, the easiest route to gain access to records is simply to log into a system using these easily obtained leaked passwords or access tokens.

Seeing the scope of the problem of leaked hard-coded secrets

Secrets leak often. Here are just a few recently reported large-scale incidents.

- An article published on August 2, 2022 revealed that Twitter API keys were leaked through 3,207 mobile apps. This type of leak allows an attacker to access various categories of sensitive information, including direct messages sent between Twitter users through associated apps.

- On September 1, 2022, Symantec published an article showing that 1,859 iOS and Android apps contained AWS tokens, 77% of which allowed access to private AWS cloud services and 47% of which allowed access to files, often numbering in the millions, in S3 buckets.

- On September 15, 2022, Toyota noticed it had inadvertently leaked keys in source code that it uploaded to GitHub, which allowed an attacker access to 296,019 customer records with email addresses. Upon discovering the leak, Toyota immediately notified affected customers to be careful of phishing attacks.

- One of the most high-profile data breaches was at Target, which had 40 million of its shoppers’ credit and debit card records stolen. As a result, at least five lawsuits were filed seeking millions of dollars in damages across several states, and Target’s sales dropped by up to 4% compared to the prior-year period. To minimise damage, the store offered a 10% discount and free credit monitoring services to affected customers. When the dust finally settled a few years later, Target had spent US$202 million in legal fees and damages, according to The New York Times.

A burning question at this point is, “Why didn’t these companies catch the leaks before they put the software into production or a public GitHub repo?” If steps and mechanisms had been put into place to detect embedded secrets (scanning developers’ code within the IDE, during pull or merge requests, or in nightly scans), it’s quite likely that the leaks would have been caught. Detecting secrets earlier in DevOps workflows helps to ensure that they are not pushed downstream and reduces the cost and delays associated with remediation.

Understanding the types of hard-coded secrets

There are various types of hard-coded secrets, including usernames, passwords, keys, and access tokens. Let’s explore the details and implications of some of these types of secrets.

Passwords

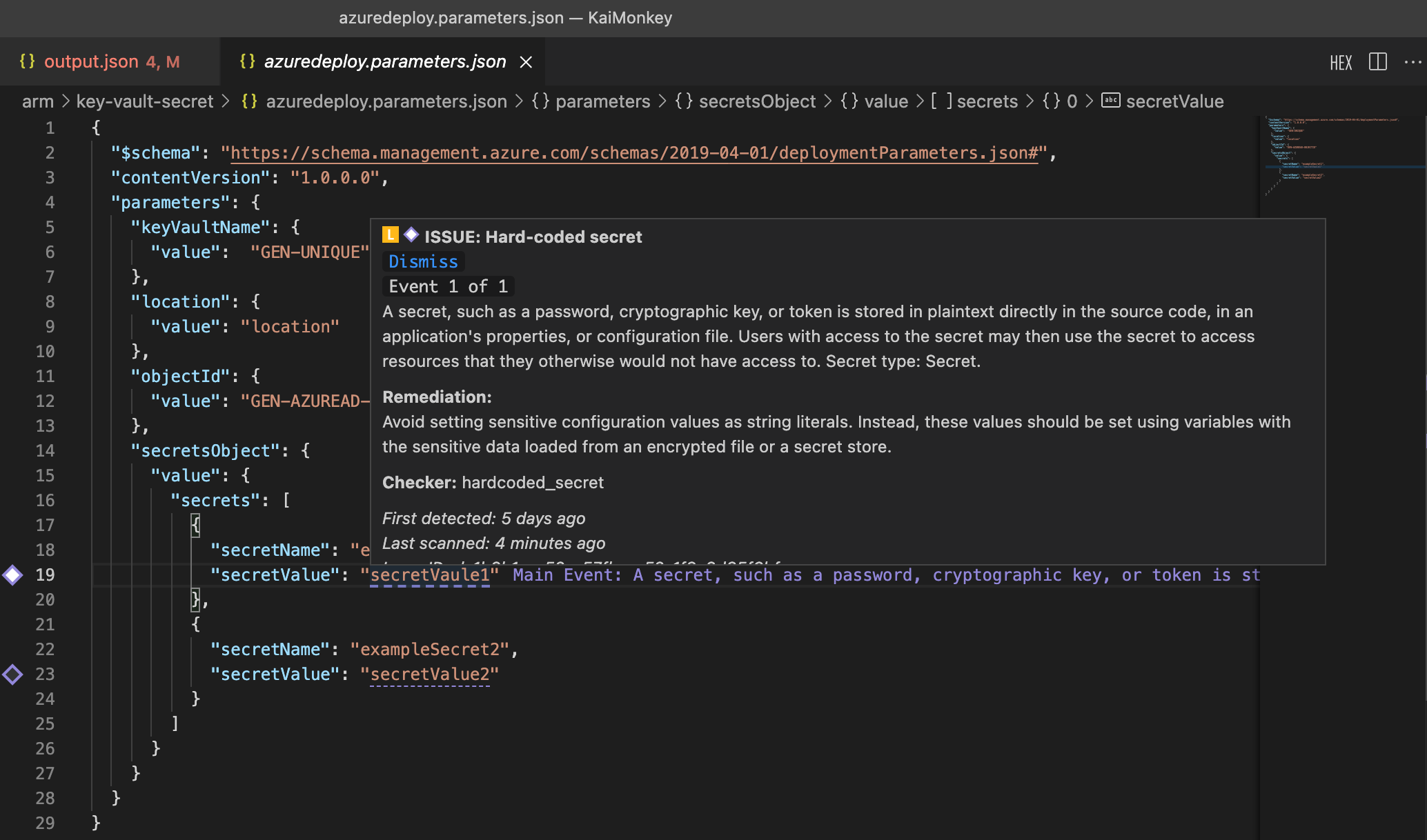

Explicit password strings in source code, whether in variable assignments, string comparisons, or any other kind of manipulation, will leak into the final executable or app. Passwords in infrastructure-as-code (IaC) configuration files, scripts, and other locations are also security risks because a determined attacker can potentially reach the underlying storage through vulnerabilities in open source components, unsecured source code, or other methods.

Figure 1: Synopsys Code Sight in VS Code detecting a hard-coded password inside an IaC config file

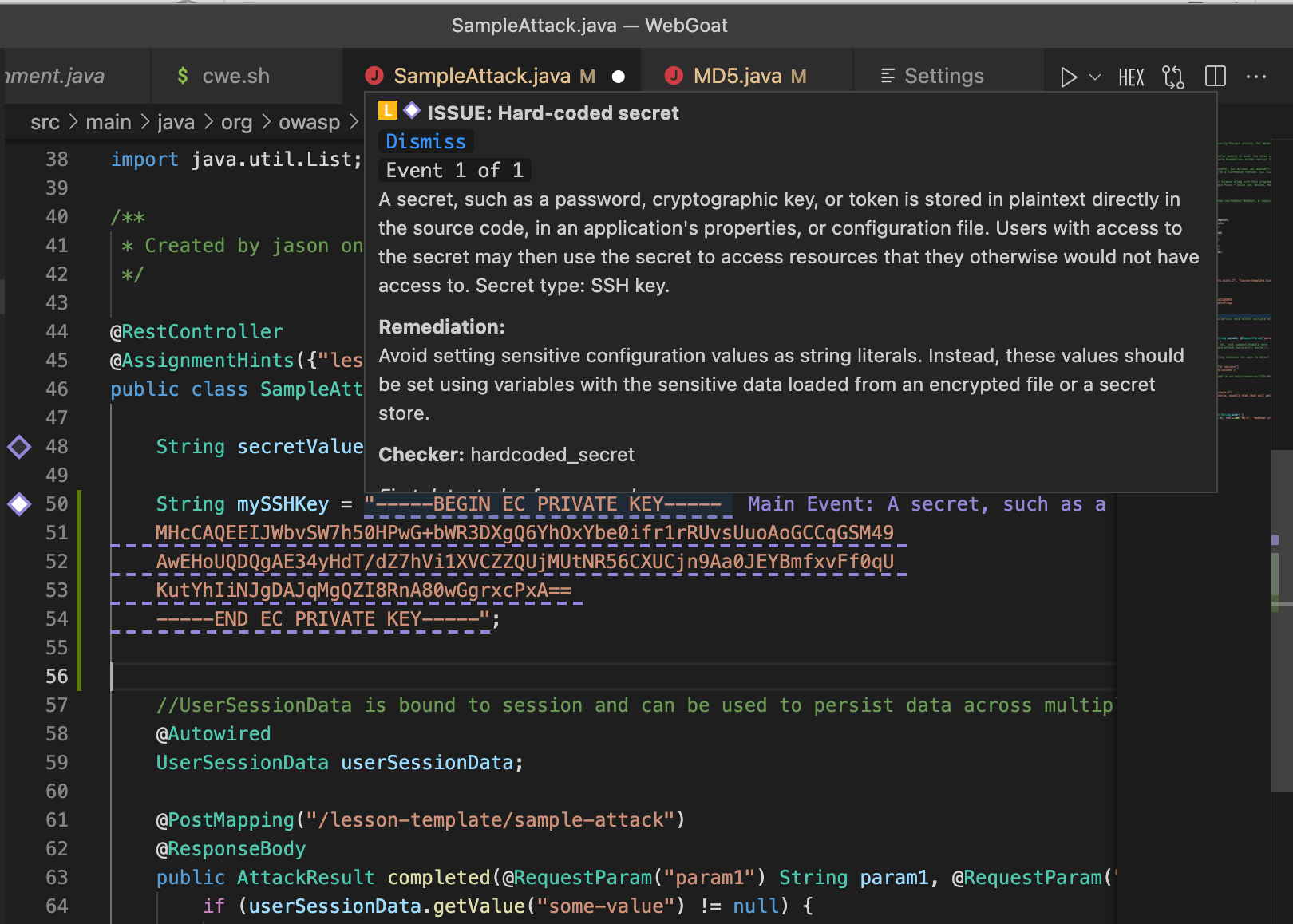

SSH keys

SSH keys are widely used to authenticate users to systems (and systems to users) via public key cryptography. The broader SSH protocol has strong encryption to protect traffic and is commonly used on the internet for system access and file transfer. Leaking an admin SSH key could give an attacker full access to the system it protects.

This example provides some context into how an SSH key could be revealed within source code.

Figure 2: Synopsys Code Sight in VS Code detecting a leaked SSH key in a Java source file

Access tokens

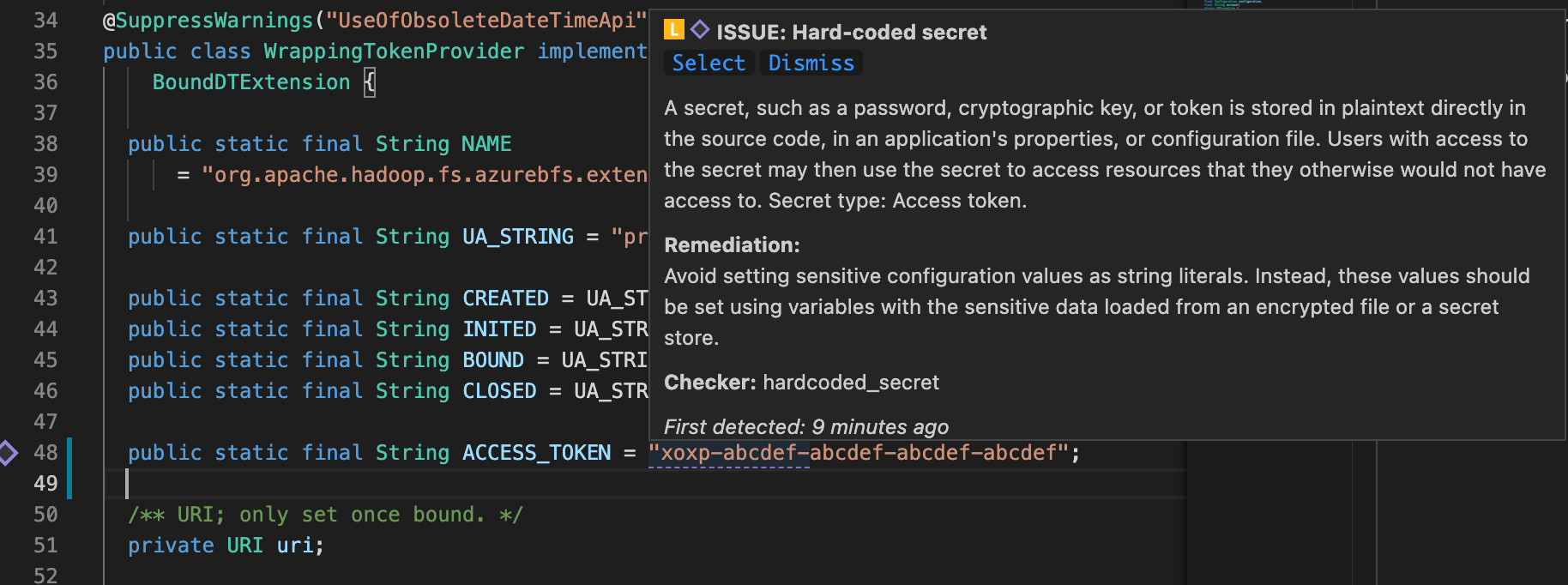

Access tokens are often used in API, HTTP, and RPC calls on the internet to authorise access to a service on behalf of a user. The token contains credentials and other pertinent information (e.g., the resources being accessed) and as such, is a secret. On the server side, if a resource or service for a user is allowed for a given token and that particular token is presented in a request, the call will succeed. It’s not hard to envision how a hacker could leverage access tokens embedded in source code to propagate an attack.
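As a rough illustration of how a token ends up embedded in source code, the sketch below builds an HTTP request with the standard `java.net.http` API (Java 11 or later). It assumes the common bearer-token scheme; the class name `ReportClient`, the `api.example.com` URL, and the token value are all hypothetical placeholders.

```java
import java.net.URI;
import java.net.http.HttpRequest;

// Illustrative only: a hypothetical API client with an embedded access token.
final class ReportClient {

    // Hard-coded secret (placeholder value): anyone who can read the source or
    // the compiled class can replay this token.
    private static final String ACCESS_TOKEN = "EXAMPLE-TOKEN-DO-NOT-USE";

    // Builds a GET request that presents the token as a bearer credential.
    static HttpRequest buildRequest() {
        return HttpRequest.newBuilder(URI.create("https://api.example.com/v1/reports"))
                .header("Authorization", "Bearer " + ACCESS_TOKEN)
                .GET()
                .build();
    }

    public static void main(String[] args) {
        HttpRequest request = buildRequest();
        // The secret travels in the header of every call made on the user's behalf.
        System.out.println(request.headers().firstValue("Authorization").orElse(""));
    }
}
```

The request is only built here, never sent. The point is that the token is an ordinary string literal, so it is recoverable from the source or the compiled class in the same way as a password.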

Figure 3: Synopsys Code Sight in VS Code detecting a leaked access token in a Java source file

Minimising false positives when detecting embedded secrets

Source code often contains many hard-coded values that are used during testing and that do not make their way into the shipped product. Because such test code is not included in the shipped product, issues reported in these sections of the codebase are generally considered false positives. It’s important to define the contexts in which the presence of a secret within source code does not pose a risk in production. For example, security, DevOps, and engineering teams can configure Rapid Scan Static to explicitly include or exclude specific files or directories for scanning.
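The idea of scoping a scan can be sketched generically. The example below is not Rapid Scan Static’s configuration format, which is not shown here; it is a hypothetical filter that uses Java’s standard glob `PathMatcher` to drop anything under a `src/test` directory before scanning. The class name `ScanScope` and the glob pattern are assumptions made for illustration.

```java
import java.nio.file.FileSystems;
import java.nio.file.Path;
import java.nio.file.PathMatcher;
import java.nio.file.Paths;
import java.util.List;
import java.util.stream.Collectors;

// Sketch of an exclusion rule applied before a secrets scan.
final class ScanScope {

    // Hypothetical exclusion rule: anything below a src/test directory.
    private static final PathMatcher TEST_CODE =
            FileSystems.getDefault().getPathMatcher("glob:**/src/test/**");

    // Returns only the candidates that are not excluded as test code.
    static List<Path> filesToScan(List<Path> candidates) {
        return candidates.stream()
                .filter(path -> !TEST_CODE.matches(path))
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<Path> candidates = List.of(
                Paths.get("service/src/main/java/Login.java"),
                Paths.get("service/src/test/java/LoginTest.java"));
        // Only the file under src/main remains in scope.
        filesToScan(candidates).forEach(System.out::println);
    }
}
```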

To increase accuracy, Rapid Scan Static does not rely solely on regex pattern matching to detect hard-coded secrets. Detecting certain secrets without wrongly excluding them from analysis requires a semantic understanding of variables, values, and other context in source code and configuration files. The approach that Synopsys takes avoids the up-front work of specifying regex patterns or configuration. This simplifies secrets detection and eliminates potential points of failure due to misconfiguration or deviation from established patterns. By contrast, solutions that rely on regex pattern matching alone can be noisy.
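To show why pattern matching alone can be noisy, here is a deliberately naive regex-only detector. It is a hypothetical rule written for this illustration and does not represent how Rapid Scan Static or any other specific tool works: it flags any assignment of a string literal to an identifier containing “password”.

```java
import java.util.regex.Pattern;

// Sketch of a regex-only detector and its blind spots.
final class RegexOnlyDetector {

    // Naive rule: an identifier containing "password", then '=', then a string literal.
    private static final Pattern PASSWORD_ASSIGNMENT =
            Pattern.compile("(?i)\\w*password\\w*\\s*=\\s*\"[^\"]+\"");

    // Returns true when the line matches the naive rule.
    static boolean flags(String line) {
        return PASSWORD_ASSIGNMENT.matcher(line).find();
    }

    public static void main(String[] args) {
        // A real hard-coded password: flagged.
        System.out.println(flags("String password = \"letmepass\";"));
        // A harmless UI label: also flagged, which is a false positive.
        System.out.println(flags("String passwordLabel = \"Enter your password\";"));
        // The same secret under a different variable name: missed.
        System.out.println(flags("String pw = \"letmepass\";"));
    }
}
```

The second and third lines illustrate the two failure modes: the rule cannot tell a label from a credential, and it cannot see a secret whose variable name falls outside the pattern.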

Looking for secrets

Secrets are typically introduced by individuals writing source code or configuration files, but there are many stages in the software development life cycle (SDLC) where they can be inadvertently added as well. For example, during a deployment build or while deploying to a staging environment for final test, an automation script may inadvertently copy a set of files that contains secrets. It’s important to account for these late-stage scenarios before final deployment into production.

When, or in what context, is it optimal to detect embedded secrets? It’s best to minimise the risk of pushing secrets downstream. As such, detecting secrets while working inside the IDE can both minimise the risk of publishing secrets and reduce the remediation effort. Late-stage detection creates more work for everyone, including for yourself as you close out tickets and make additional commits.